Running a CMS on Serverless Infrastructure: Scale to Zero Without Traditional Hosting

A serverless CMS architecture changes the economics of idle capacity, burst traffic, and platform operations in ways that traditional hosting models struggle to match.

Traditional CMS hosting assumes that someone is always paying for waiting.

Even when traffic is quiet, infrastructure stays provisioned. Even when performance is mostly fine, teams still spend time thinking about instance sizing, cache layers, failover, and traffic spikes.

That operational shape made sense for an earlier web. It is a weaker fit for modern publishing infrastructure.

What “scale to zero” changes

The phrase sounds like a cost optimization: when no requests arrive, no compute runs, so an idle site stops accruing compute charges. But the real benefit is broader than that.

A scale-to-zero architecture changes how you think about:

- idle environments

- long-tail sites

- bursty traffic

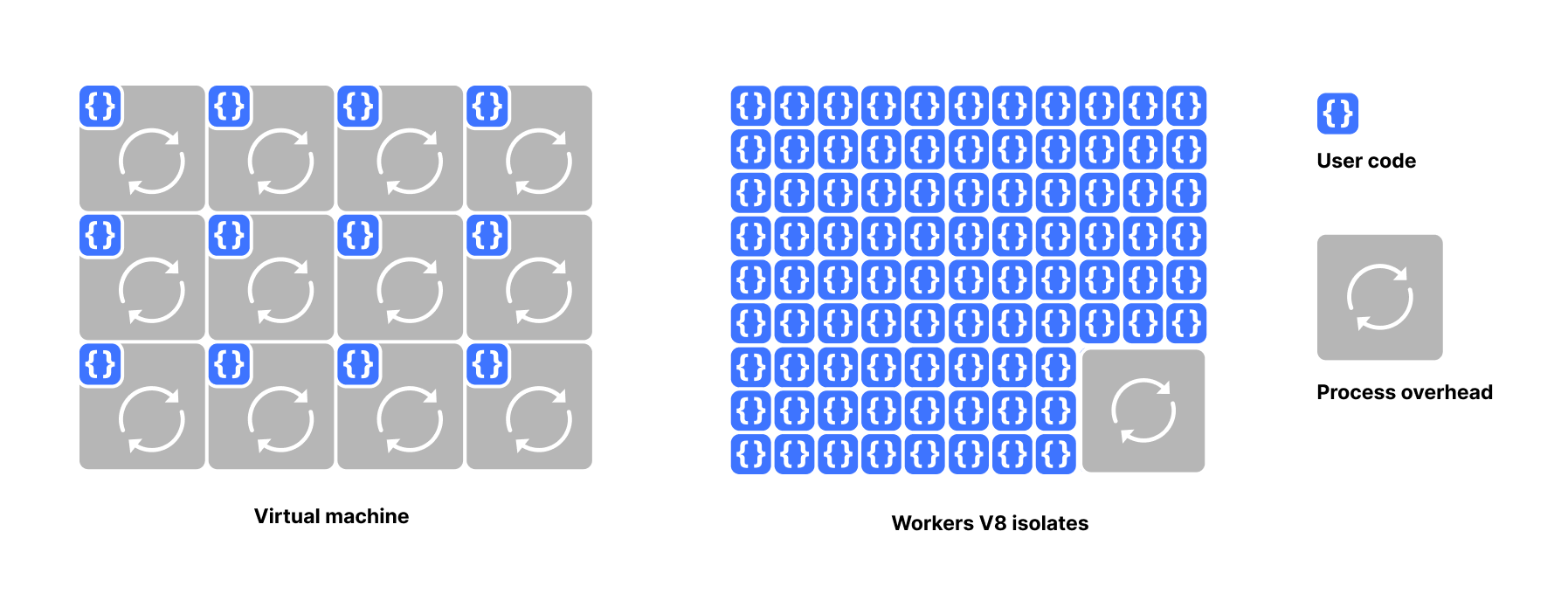

- platform multi-tenancy

- preview and experimentation surfaces

If a CMS can run without forcing you to keep idle capacity warm, the economics of hosting many sites look very different.
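
To make that concrete, here is a back-of-envelope model in Python. It assumes one dedicated always-on instance per site versus pure per-request billing, and every price and traffic figure in it is a hypothetical placeholder rather than any provider's rate. It also ignores duration charges, storage, and data transfer, which real bills include.

```python
"""Back-of-envelope comparison of always-on hosting vs scale-to-zero billing.

Every price and traffic figure here is a hypothetical placeholder, not a
quote from any provider. Duration, storage, and egress charges are ignored.
"""

from dataclasses import dataclass

HOURS_PER_MONTH = 730  # common billing approximation (8760 h / 12)


@dataclass
class Site:
    name: str
    monthly_requests: int


def always_on_cost(sites: list[Site], price_per_instance_hour: float) -> float:
    """One dedicated instance per site, billed every hour whether busy or idle."""
    return len(sites) * price_per_instance_hour * HOURS_PER_MONTH


def scale_to_zero_cost(sites: list[Site], price_per_million_requests: float) -> float:
    """Billing follows requests only, so an idle site adds nothing."""
    total_requests = sum(site.monthly_requests for site in sites)
    return total_requests / 1_000_000 * price_per_million_requests


# Hypothetical long-tail fleet: 10 busy sites and 490 nearly idle ones.
fleet = [Site(f"busy-{i}", 2_000_000) for i in range(10)] + [
    Site(f"quiet-{i}", 10_000) for i in range(490)
]

print(f"always-on:     ${always_on_cost(fleet, 0.02):,.2f}")
print(f"scale-to-zero: ${scale_to_zero_cost(fleet, 0.40):,.2f}")
```

With a long-tail fleet, the always-on total grows with the number of sites, while the per-request total grows with the traffic those sites actually receive.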

Why this matters for publishing platforms

CMS traffic is often uneven. Some sites are quiet for long stretches and then spike hard because of a launch, a campaign, or a social burst.

A traditional stack handles that with some mix of over-provisioning, shared servers where sites contend for the same resources, or complex tuning. A serverless model is a better match when you want the system to react to traffic instead of constantly anticipating it.
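
Reacting to traffic can be sketched with Little's law: the number of in-flight requests equals arrival rate times average latency, and dividing by how many concurrent requests one instance can hold gives the instances a given hour needs. The latency, the per-instance concurrency limit, and the hourly traffic below are hypothetical, and the model ignores cold starts and any warm instances a platform may keep around.

```python
"""Reactive capacity vs capacity provisioned for peak.

Uses Little's law (in-flight requests = arrival rate x latency) to estimate
how many instances a traffic level needs. Latency, concurrency limit, and
traffic are hypothetical values chosen for illustration.
"""

import math

AVG_LATENCY_S = 0.2   # assumed average request latency, in seconds
MAX_CONCURRENCY = 50  # assumed in-flight requests one instance can hold


def instances_needed(requests_per_second: float) -> int:
    """Instances required to absorb the given load; zero when idle."""
    in_flight = requests_per_second * AVG_LATENCY_S
    return math.ceil(in_flight / MAX_CONCURRENCY)


# Hypothetical hourly request rates: quiet overnight, then a campaign spike.
hourly_rps = [0, 0, 0, 1, 2, 5, 400, 2400, 900, 30, 3, 0]

reactive = [instances_needed(rps) for rps in hourly_rps]
peak_sized = [max(reactive)] * len(hourly_rps)  # fixed fleet sized for the spike

print("reactive instances per hour: ", reactive)
print("instance-hours (reactive):   ", sum(reactive))
print("instance-hours (peak-sized): ", sum(peak_sized))
```

The peak-sized total is what over-provisioning pays for across the whole window; the reactive total spends capacity mainly during the spike and falls to zero when traffic stops.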

Why this fits EmDash

EmDash is designed with a stronger serverless story than WordPress, whose conventional deployment is a long-running PHP server backed by MySQL. That matters for:

- platform operators hosting many sites

- teams that want lower idle cost

- projects that want cleaner scaling behavior

- organizations that prefer managed infrastructure primitives over server babysitting

This does not mean every site must be deployed the same way. It means the architecture is aligned with modern deployment expectations rather than fighting them.

What teams should still plan for

Serverless does not erase operational thinking. You still need to care about:

- cache strategy

- asset delivery

- background workflows

- data locality

- observability
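
For cache strategy, one common approach is to vary `Cache-Control` by kind of response, so a CDN absorbs most published-page traffic before it reaches serverless compute. The sketch below assumes a hypothetical split of responses into three categories with hypothetical TTLs; the directives themselves (`s-maxage`, `stale-while-revalidate`, `immutable`, `no-store`) are standard HTTP caching directives, though CDN support for `stale-while-revalidate` varies.

```python
"""Choosing Cache-Control headers per kind of CMS response.

The response categories and TTL values are hypothetical; the directives
are standard HTTP caching directives.
"""

from enum import Enum


class ResponseKind(Enum):
    PUBLISHED_PAGE = "published_page"  # rendered article or landing page
    HASHED_ASSET = "hashed_asset"      # JS/CSS/image with a content hash in its URL
    PREVIEW = "preview"                # draft preview or admin view


def cache_control(kind: ResponseKind, page_ttl_s: int = 300) -> str:
    if kind is ResponseKind.PUBLISHED_PAGE:
        # Browsers revalidate each time; shared caches (the CDN) keep the page
        # for page_ttl_s and may serve it stale for 60 s while refetching.
        return f"public, max-age=0, s-maxage={page_ttl_s}, stale-while-revalidate=60"
    if kind is ResponseKind.HASHED_ASSET:
        # A new build yields a new URL, so content at an old URL never changes.
        return "public, max-age=31536000, immutable"
    # Drafts and admin views must never be stored by any cache.
    return "private, no-store"


for kind in ResponseKind:
    print(f"{kind.value:15} {cache_control(kind)}")
```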

But these are better problems to have than keeping underutilized compute alive just in case traffic arrives.

The practical argument

The best reason to run a CMS on serverless infrastructure is not hype. It is that publishing systems usually benefit from flexible compute far more than they benefit from permanently reserved compute.

That is the core advantage. You pay more attention to the work the system is doing and less attention to the machinery waiting around it.